Nothing in nature grows forever. Why should artificial intelligence be any different?

There is a quiet belief behind almost every conversation about AI today: that it will keep getting better, faster, and bigger — forever. That next year’s models will always be a giant leap ahead. The curve only goes up.

But that belief shouldn’t be taken for granted. If you step back far enough, a familiar pattern shows up. One that biology figured out long ago. One that empires learned the hard way. One that economics has tracked for centuries.

Growth slows. Not because fuel runs out, but because constraints pile up.

What a Blue Whale Knows That Silicon Valley Doesn’t

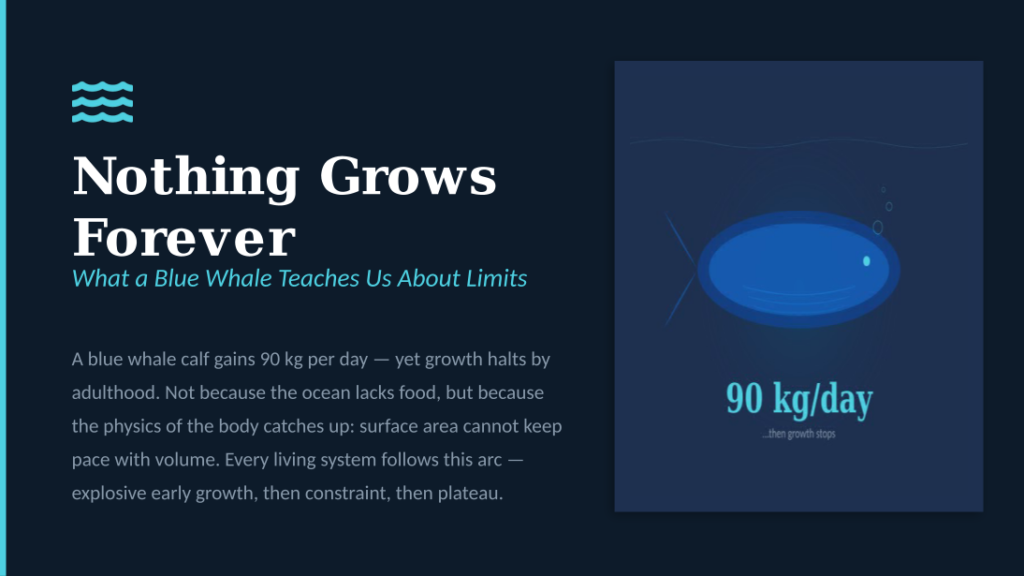

The blue whale is the largest animal that has ever lived. A calf can gain nearly 90 kilograms a day in its first months. That is astonishing growth.

Yet the blue whale does not grow forever. By adulthood, it stops almost completely.

Why? Not because the ocean runs out of food. Growth stops because the body’s own physics catches up. As an animal gets bigger, its volume grows faster than its surface area. It becomes harder to absorb oxygen, shed heat, and keep the whole system running. At some point, getting bigger would make the whale weaker, not stronger.
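The geometry behind that paragraph can be checked with a few lines of Python. This is only a sketch: it uses a cube as a stand-in for a body (an assumption for illustration, not a model of a whale), and the function name is made up here. The point is simply that surface area scales with the square of length while volume scales with the cube, so the surface available per unit of volume keeps shrinking.

```python
def surface_to_volume_ratio(edge_m: float) -> float:
    """Surface area divided by volume for a cube with the given edge length."""
    surface = 6 * edge_m ** 2   # six square faces
    volume = edge_m ** 3
    return surface / volume     # simplifies to 6 / edge_m


# Doubling the edge length halves the surface available per unit of volume.
for edge in (1, 2, 4, 8):
    ratio = surface_to_volume_ratio(edge)
    print(f"edge {edge} m: surface/volume = {ratio:.2f}")
```

Whatever the exact shape, the same trend holds: the bigger the body, the less surface it has for each unit of tissue it needs to supply and cool.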

This is not just a whale problem. Bacteria in a lab dish do the same thing — they multiply fast, then slow down as food runs low and waste builds up. Ecologists call this the logistic growth curve. It looks like a stretched-out S. The lesson is always the same: fast early growth is real, but it is also temporary.
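For readers who want to see the S-shape emerge, here is a minimal sketch of the standard logistic function. The parameter values (a ceiling of 1,000, a starting population of 10, a growth rate of 0.5 per time step) are arbitrary assumptions chosen for illustration, not measurements from any real culture.

```python
import math


def logistic(t: float, ceiling: float, initial: float, rate: float) -> float:
    """Population at time t under logistic growth toward a fixed ceiling."""
    a = (ceiling - initial) / initial
    return ceiling / (1 + a * math.exp(-rate * t))


# Hypothetical values, picked only to make the shape visible.
CEILING, INITIAL, RATE = 1000.0, 10.0, 0.5

previous = logistic(0, CEILING, INITIAL, RATE)
for t in range(2, 21, 2):
    current = logistic(t, CEILING, INITIAL, RATE)
    # The gain per interval rises at first, peaks mid-curve, then shrinks.
    print(f"t={t:2d}  population={current:7.1f}  gain={current - previous:6.1f}")
    previous = current
```

The gain column is the part to watch: it climbs, peaks around the middle of the curve, and then falls away as the population nears its ceiling.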

The constraints don’t arrive suddenly. They creep in quietly, until the system that once seemed unstoppable is spending most of its energy just keeping things going.

Empires That Could Not Expand Forever

History offers some of the most dramatic examples of this pattern.

The Roman Empire grew for centuries. At its peak around 117 AD, it stretched from Britain to Mesopotamia. But even Rome, with its roads, legions, and legal systems, hit limits. The bigger the empire became, the harder it was to defend its borders, collect taxes from distant provinces, and hold together populations that had little in common. Rome did not falter for lack of ambition. It slowed because the cost of holding everything together grew faster than the benefits of adding more.

The British Empire followed a remarkably similar arc. At its height in the early 1920s, it covered roughly a quarter of the world’s land. But governing such a vast system required enormous resources: military, administrative, and financial. Two world wars drained those resources. Meanwhile, the people being governed began demanding independence. The empire shrank not because Britain suddenly became weak, but because the costs of maintaining a global system outweighed the returns.

Every empire teaches the same lesson: expansion creates complexity, and complexity eventually becomes its own brake.

The pattern is not about failure. Rome’s roads, laws, and language shaped Western civilisation for millennia after the empire itself faded. Britain’s institutions, parliamentary systems, and trade networks endure today. The growth stopped, but the legacy remained. That distinction matters.

The Economy Already Learned This

Economics shows the same pattern in a different setting.

When the steam engine arrived, productivity jumped. One machine could do the work of dozens of people. Electricity multiplied output again. Computers, through spreadsheets, databases, and automated logistics, created another leap. Each wave looked like the start of endless acceleration.

But each wave also ran into limits. The first tractor on a farm changes everything. The tenth adds almost nothing. Economists call this the law of diminishing returns. The idea is simple: the easy gains come first, and each additional push takes more effort for less reward.
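The tractor example can be put into numbers with a toy model. The square-root output function below is purely an assumption for illustration (any function that keeps rising but flattens would make the same point), and the function names are hypothetical.

```python
def farm_output(tractors: int) -> float:
    """Toy output function (assumed): rises with tractors, but ever more slowly."""
    return tractors ** 0.5


def marginal_gain(nth_tractor: int) -> float:
    """Extra output from adding the n-th tractor to a farm that has n - 1."""
    return farm_output(nth_tractor) - farm_output(nth_tractor - 1)


for n in (1, 2, 5, 10):
    print(f"tractor #{n:2d} adds {marginal_gain(n):.3f} units of output")
```

Total output never falls in this sketch. What shrinks is the reward for each additional unit of effort, which is all the law of diminishing returns claims.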

Markets fill up. Productivity curves flatten. The car reshaped civilisation between 1910 and 1960. Since then, cars have improved — safer, more fuel-efficient, more comfortable — but the great disruption is long past.

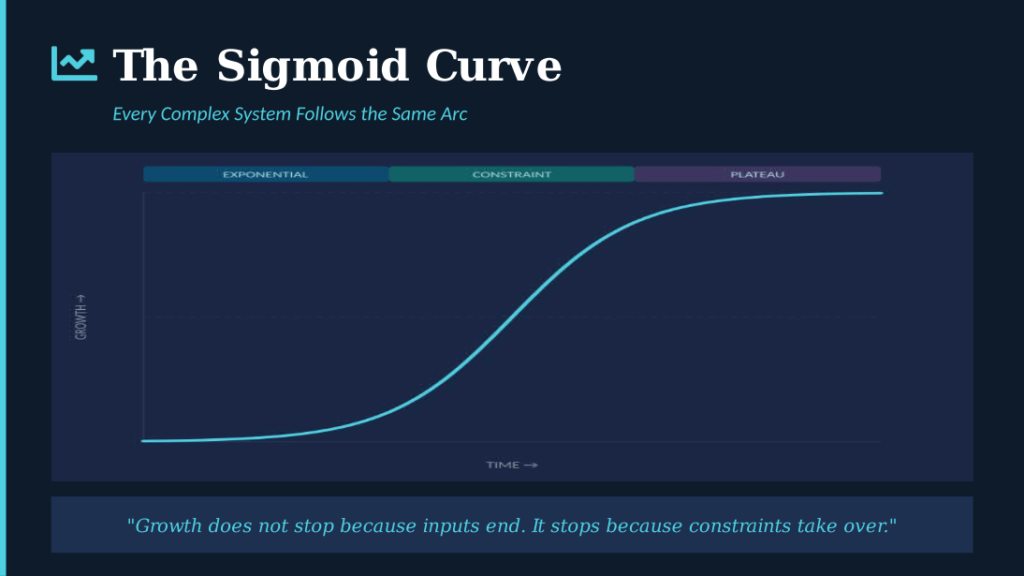

Growth does not stop because inputs end. It stops because constraints take over.

The S-Curve Is Not a Prediction. It Is a Pattern.

What biology, empires, and economics share is not a coincidence. It is a basic truth about how complex systems work.

The S-curve (sigmoid) is not something someone made up to be pessimistic. It is something people have observed again and again, in systems so different that the repetition itself is the point.

Every complex system goes through the same three phases. First, a fast rise — conditions are good, and limits are far away. Second, a transition — growth continues, but resistance builds. Third, a plateau — the system settles, and further progress comes from fine-tuning, not expanding.

The interesting question is never whether a system will follow this curve. It always does. The interesting question is: where on the curve are we right now?

Where AI Sits on the Curve

As of early 2026, AI is almost certainly still on the steep part of the climb. The progress of the last five years has been remarkable. Language models went from curiosity to everyday tools. Image generation went from toy to professional instrument. Capabilities that seemed a decade away showed up in months.

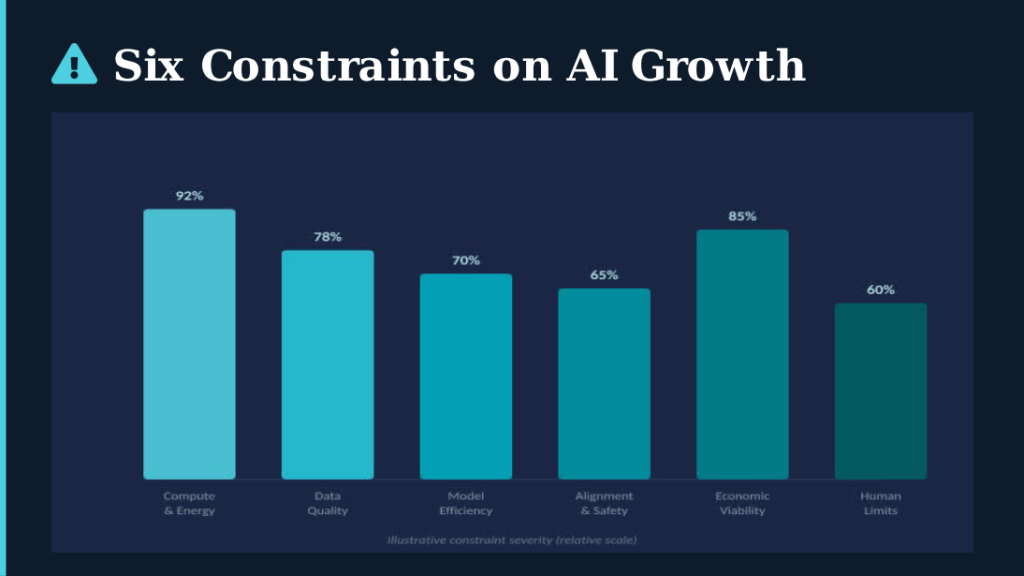

But the constraints are already showing. Not as a wall, but as friction — the kind that bends a curve before it flattens it.

Compute and energy are the most obvious limit. Training top models now needs data centres that use as much power as small cities. Each meaningful jump in ability requires not twice the computing power, but ten or a hundred times more. The energy systems to support that do not yet exist, and building them takes years.
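A rough way to picture that arithmetic: suppose, purely as an assumption, that each equal step in capability requires ten times the compute of the previous one. The function name, the baseline of 1 unit, and the factor of ten are illustrative choices, not measured figures.

```python
def compute_for_level(level: int, baseline: int = 1, factor: int = 10) -> int:
    """Compute needed to reach a capability level, assuming each step costs
    `factor` times the one before it (an illustrative assumption)."""
    return baseline * factor ** level


for level in range(5):
    needed = compute_for_level(level)
    print(f"capability level {level}: {needed:>6,} units of compute")
```

Under that assumption, moving up by one level always costs nine times as much additional compute as everything spent to reach the current level, which is why power supply becomes a planning problem rather than a line item.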

Data quality is a quieter ceiling. The easy data, the internet’s publicly available text and images, has mostly been used up. What remains is either private, highly specialised, or not very useful. Training models on data generated by other models creates an intellectual echo chamber in which quality degrades over time, a failure mode researchers call model collapse.

Diminishing returns in model performance are already visible. Each new generation of top models costs far more to build but delivers smaller improvements. The jump from GPT-3 to GPT-4 was a transformation. The jumps since then have been more like refinements. This is the S-curve bending.

Safety and alignment add constraints that go beyond engineering. As models become more powerful, the risks of getting things wrong grow faster than the benefits of getting things right. Society will — and should — put guardrails in place. This is not a failure. It is what every powerful technology eventually faces.

Economic viability may be the most decisive limit. If a model costs ten billion dollars to build but is only slightly better than one that cost one billion, the business case collapses. Markets are good at correcting paths that don’t make financial sense.

Human and institutional limits may matter most of all. Organisations absorb new technology slowly. Governments take years to write regulations. People build trust at human speed, not machine speed. The real bottleneck for AI is increasingly not what models can do, but what people and institutions can actually use.

Adapting Across the Curve

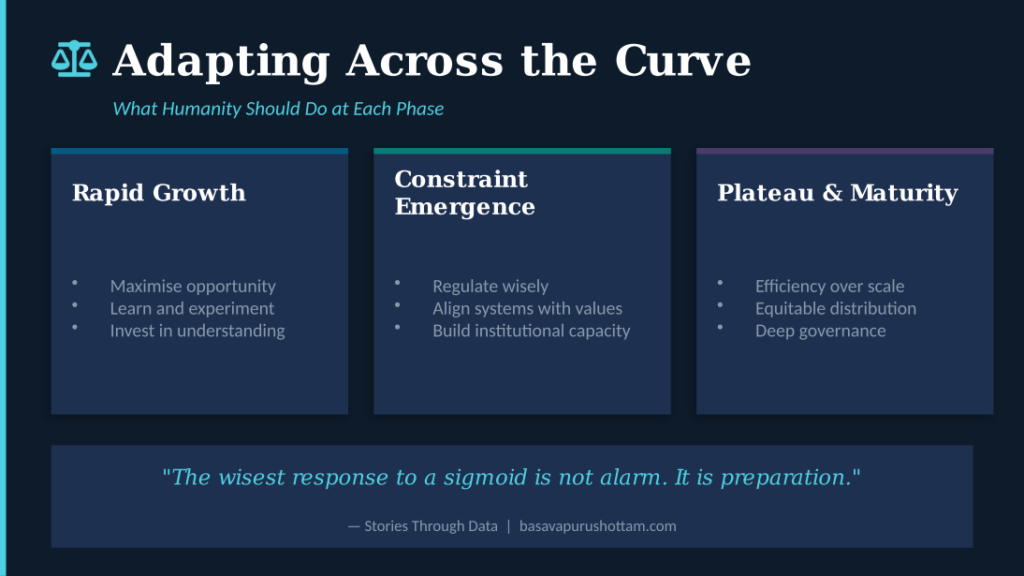

If the S-curve is inevitable, the smart response is not to fight it but to adapt at each stage.

During the current rapid growth phase, the priority is learning. People, companies, and governments that invest now in understanding AI — not just using it, but grasping how it works, where it breaks, and what it changes — will be best prepared for what comes next.

As constraints build — and they are building now — the focus should shift to regulation, safety, and building the capacity to govern wisely. The question moves from “how fast can we build?” to “how wisely can we use what we have?”

At the plateau, the game changes completely. Efficiency matters more than scale. Fair distribution matters more than competitive hoarding. The societies that do well will not be those with the biggest models but those that have learned to put AI to work where it matters most — in healthcare, education, agriculture, governance — and to share the benefits widely.

The Calm After the Curve

None of this means AI will fail. The car did not fail when its growth curve flattened. Electricity did not fail. The internet did not fail. The Roman road network did not fail — it is still traced in European highways two thousand years later. Each of these systems changed the world during its steep phase, then settled into a lasting role in daily life. AI will almost certainly do the same.

The danger is not that AI will stop growing. The danger is that we plan as though it never will — that we build expectations, policies, and institutions for permanent acceleration, and then find ourselves lost when the curve bends.

The wisest response to an S-curve is not alarm. It is preparation.

Every complex system teaches the same lesson: the most useful phase is not the explosive beginning. It is the long, steady plateau that follows — where the real work of making things better, fairer, and more durable takes place. The blue whale does not mourn the end of its growth phase. It simply lives, fully formed, in the world whose growth made it possible. Rome’s greatest contribution was not its conquests but its laws.

AI will follow the same path. The question is whether we will be ready.